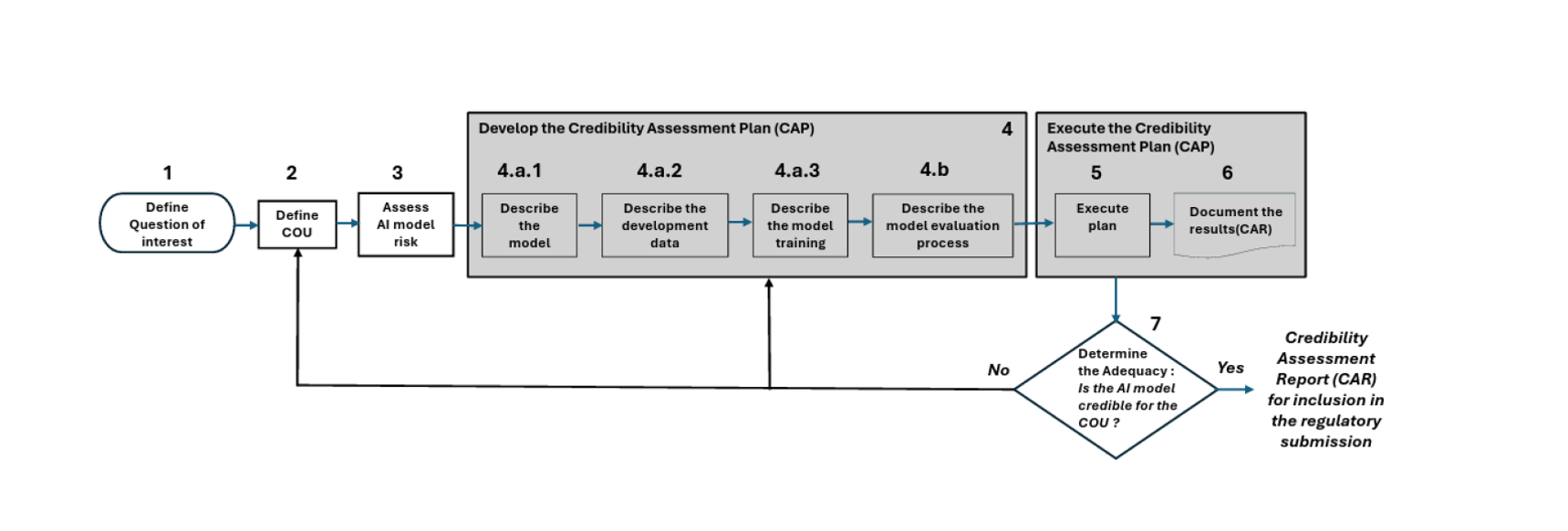

FDA’s 7-Step Credibility Assessment Framework for Use of AI

Artificial Intelligence (AI) is increasingly used across the drug product lifecycle – from development and manufacturing to quality monitoring and advanced analytics. The FDA draft guidance on the use of AI to support regulatory decision-making for drug and biological products introduces a structured, risk-based 7‑step credibility assessment framework to evaluate whether an AI model is reliable and appropriate for its intended context of use.

Figure 1. The 7-step approach. Figure adapted from PDA (Parenteral Drug Association®) Comments to FDA’s Draft Guidance.

The table below summarizes the key steps and what regulatory professionals should consider when evaluating AI model credibility.

| Step | Framework Element | Purpose | Regulatory Considerations |
|---|---|---|---|
| 1 | Define Question of Interest | Identify the scientific or regulatory problem the AI model addresses. | Ensure the model objective directly supports a regulatory or development decision. |
| 2 | Define Context of Use (COU) | Describe how and where the AI model will be used. | Clarify intended users, environment, and regulatory impact. |
| 3 | Assess AI Model Risk | Evaluate the influence of the model and consequences of incorrect predictions. | Higher influence and higher decision consequences require stronger evidence. |
| 4 | Develop Credibility Assessment Plan (CAP) | Plan how the model will be validated and evaluated. | Document model design, training data, and validation approach. |
| 5 | Execute the CAP | Perform validation activities and test performance. | Assess robustness, reproducibility, and reliability of predictions. |
| 6 | Document Results (CAR) | Summarize validation results in a Credibility Assessment Report. | Provide transparent documentation for regulatory review. |
| 7 | Determine Adequacy for COU | Confirm whether the model is credible for its intended use. | Ensure evidence supports inclusion in regulatory submissions. |
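Step 3 in the table combines two factors: how much the model influences the decision, and how severe the consequence of a wrong decision would be. The following Python sketch shows one way such a combination could be expressed as a simple risk matrix. The three-level scale, the multiplication rule, and the thresholds are illustrative assumptions made for this example only; they are not taken from the FDA draft guidance, and any real risk rating should be justified for the specific context of use.

```python
from enum import IntEnum


class Level(IntEnum):
    """Hypothetical three-level scale used for both factors and the result."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def model_risk(influence: Level, consequence: Level) -> Level:
    """Combine model influence and decision consequence into a risk tier.

    Assumption: the score is the product of the two levels (1 to 9),
    and the cut-offs below are arbitrary illustrative thresholds.
    """
    score = int(influence) * int(consequence)
    if score >= 6:
        return Level.HIGH
    if score >= 3:
        return Level.MEDIUM
    return Level.LOW


# Example: the model is the main basis for the decision (high influence)
# and a wrong decision has a moderate consequence.
print(model_risk(Level.HIGH, Level.MEDIUM).name)  # HIGH
```

In a scheme like this, a higher tier would translate into a more extensive Credibility Assessment Plan in Step 4, in line with the principle that higher influence and higher decision consequences require stronger evidence.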

Key Takeaways

• AI models used in regulatory contexts must demonstrate credibility through structured validation.

• The Context of Use (COU) determines the level of evidence required for regulatory acceptance.

• Risk‑based evaluation considers both model influence and the consequence of incorrect predictions.

• Clear documentation through the Credibility Assessment Plan (CAP) and Credibility Assessment Report (CAR) is essential.

• Early alignment with the framework helps organizations prepare AI‑supported regulatory submissions more efficiently.

Implementing this framework early in AI development helps ensure that AI‑generated insights are scientifically robust, transparent, and suitable for regulatory decision‑making.

How does GxP-CC support you?

We help life science companies navigate the complex intersection of cutting-edge technology and strict regulatory requirements. From bridging the gap between IT/Data Science and Quality Assurance to executing validation strategies, we provide clear, practical, and regulatory-aligned solutions.

With innovative training formats and proven validation templates, our experts ensure your AI/ML initiatives meet FDA, EMA, and ISPE expectations, keeping you inspection-ready and future-proof. We go with you every step of the way, with support that is efficient, audit-ready, and individually tailored to your requirements.

If you would like to learn more or discuss your specific needs, feel free to contact us via our website.