A Look-back on Data Integrity in 2020 – Part 1

Data Integrity in 2020

The year 2020 was a game-changer in many respects. It has altered the way we perceive reality in every facet of our lives and how we carry out our everyday activities. This is even more true of the healthcare world, which underwent a tremendous shift on a global scale in a span of 10 months. The world of regulatory affairs and compliance has also undergone changes that have affected our views on data and our attitude towards data governance and data integrity (DI).

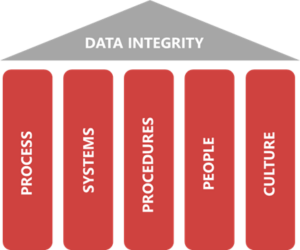

Over the course of last year, two major industry guidance documents pertaining to DI were released. The first, “Data Integrity by Design”, drafted by the International Society for Pharmaceutical Engineering (ISPE), highlights the importance of data governance and knowledge management. The second, “PDA TR84”, released by the Parenteral Drug Association (PDA), merges the realm of DI with manufacturing and packaging. Both documents do a great job of breaking down the different aspects of DI, such as quality risk management (QRM), records retention, and computerized system validation (CSV), and drive home the message that these components are the building blocks of DI today.

The ISPE guidance conveys that, to unlock the full potential of data, it is important to treat data and knowledge as an asset. A prime requirement for developing that perspective is to embrace and practice a quality culture, starting at the highest level of management in the organization. This allows the quality culture to trickle down to the rest of the organization, including the supporting and business processes. In this article, we briefly look at how these guidance documents tackle some of the important topics in maintaining DI, such as Quality Risk Management (QRM), data process flow maps, and records retention.

Quality Risk Management

The foundation of the ISPE guidance “Data Integrity by Design” is that the data governance framework needs to be established alongside Quality Risk Management (QRM) and knowledge management (KM). QRM is a process that requires timely risk identification and analysis, which in turn make it possible to review and control the risk. Product quality, patient safety, and data integrity form the holy trinity on which efficient risk assessment is based. The ISPE guidance elaborates on how holistic quality risk management requires a deep understanding of both product and process.

Human factors are an undeniable source of potential risk and need to be monitored. Root cause analysis is paramount to risk analysis (RA), as it helps to distinguish between unintentional and intentional acts by personnel that could lead to a risk. The TR84 guidance draws a clear distinction between the two and describes methods to control and mitigate such risks based on the motive. Knowledge and process gaps are prime causes of unintentional acts that eventually lead to risks. Bridging knowledge gaps through effective training programs for staff helps reap the benefits of having a tight RA structure in place. Improving processes by fine-tuning standard operating procedures can also go a long way towards identifying and rectifying process gaps. In the case of intentional misconduct, it is important that the highest level of management is stringent with the controls in place to recognize such looming threats. In addition, management must act as a role model for the rest of the organization by consciously centering the company’s values around patient safety and product quality.

Data Process Flow Maps

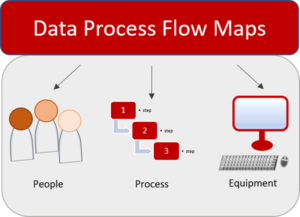

Data process flow maps are gaining traction as an efficient way to identify risks and maintain DI throughout the data lifecycle. Mapping the data process flow helps us identify the rationale and the risk associated with every step of the data flow. This approach also has a significant impact on the subsequent decisions that are made based on the data obtained. The TR84 guidance rightly points out that this approach also motivates us to document our data more efficiently.

The data process flow map begins with the generation of data and includes all the devices and equipment that come into play during the entire data lifecycle. Documenting every single component as part of the data flow map helps create a comprehensive system that can serve as a tool to troubleshoot errors, project future effects, and reveal DI vulnerabilities in different parts of the data process flow. The TR84 guidance mentions that identifying the DI risks associated with the individual processes in the data flow map requires us to employ our critical thinking skills. By asking the right questions about the different aspects of the data flow process, i.e., people, processes, and equipment, we can generate a data process flow map that is congruent with reality. These maps provide the scaffolding for implementing data integrity controls along the way, with the choice of controls depending on the criticality of the data.
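As a rough illustration of the idea above, a data process flow map can be sketched as a list of steps, each recording the people, process, and equipment involved, along with its criticality and any identified risks. The sketch below is only a minimal example under stated assumptions: the field names, the three-level criticality scale, the controls assigned to each level, and the sample steps are all hypothetical and are not taken from the TR84 or ISPE guidance.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class FlowStep:
    """One step in a data process flow map. All field names are illustrative."""
    name: str
    people: str       # who performs or reviews the step
    process: str      # procedure that governs the step
    equipment: str    # device or system involved
    criticality: str  # "high", "medium", or "low" (assumed scale)
    risks: List[str] = field(default_factory=list)  # DI risks identified for the step


# Assumed mapping from data criticality to DI controls; not taken from any guidance.
CONTROLS_BY_CRITICALITY: Dict[str, List[str]] = {
    "high": ["audit trail review", "second-person verification"],
    "medium": ["periodic review"],
    "low": [],
}


def controls_for(step: FlowStep) -> List[str]:
    """Return the controls assigned to a step based on its criticality."""
    return CONTROLS_BY_CRITICALITY[step.criticality]


def high_risk_steps(flow: List[FlowStep]) -> List[str]:
    """Names of high-criticality steps that have at least one identified risk."""
    return [s.name for s in flow if s.criticality == "high" and s.risks]


# A made-up three-step flow, starting with data generation.
flow = [
    FlowStep("Weigh sample", "analyst", "weighing SOP", "balance", "high"),
    FlowStep("Transcribe result", "analyst", "data entry SOP", "LIMS", "high",
             risks=["manual transcription error"]),
    FlowStep("Archive report", "QA", "archiving SOP", "document system", "low"),
]

for step in flow:
    print(step.name, "->", controls_for(step))
print("Needs attention:", high_risk_steps(flow))
```

Keeping the map as structured data rather than only as a diagram makes it easy to list the steps where critical data meets an open risk, which is where controls are likely to matter most.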

Records Retention

Long-term retention of records is an important topic in today’s digitalized world. The ISPE GAMP (Good Automated Manufacturing Practice) guide “Data Integrity by Design” suggests that a long-term retention strategy for records should be developed. It is crucial that this topic is considered during process and system design so that records can be managed effectively across both the system and data lifecycles. As electronic data become increasingly prevalent, the inherent nature of data and records is also evolving. A clear distinction between static and dynamic records, and their discrete retention methods, is required to prevent incomplete data retention. On the flip side, some records might be deemed obsolete in today’s DI landscape. It is therefore important that organizations do not cling to old-fashioned storage mechanisms simply to preserve outdated forms of records, as this can introduce new DI risks. The ISPE guideline suggests that the ideal way to execute long-term records retention is to document the data according to business needs, together with the associated risks and the plans to mitigate them. This should start at the beginning of the data and system life cycle, and the risks should be revised until decommissioning.

(To be continued…)